Research on Cultural Heritage and Digital Humanities

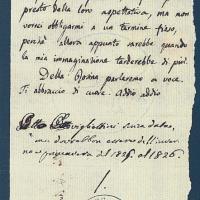

Handwritten Text Recognition on Historical Documents

Handwritten Text Recognition (HTR) aims at automating document processing by providing natural language transcriptions of handwritten texts. As such, it plays an important role in automated services, document processing, and Digital Humanities. In this last field, applications range from the transcription of large document corpora to the analysis of toponyms on ancient maps. Although Optical Character Recognition (OCR) is a mature and well-established technology, HTR remains a challenging task even when tackled with approaches based on feature learning, especially on free-layout pages and historical documents.

Visual-Semantic Domain Adaptation in Digital Humanities

While several approaches to bring vision and language together are emerging, none of them has yet addressed the digital humanities domain, which, nevertheless, is a rich source of visual and textual data. To foster research in this direction, we investigate the learning of visual-semantic embeddings for historical document illustrations and data from the digital humanities, devising both supervised and semi-supervised approaches.

Art2Real: Translating Artworks to Photo-Realistic Images

Deep Learning models are usually trained on images captured from the world as it is. This leads to an incompatibility between such methods and digital data from the artistic domain, on which current techniques underperform. A possible solution is to reduce the domain shift at the pixel level, thus translating artistic images to realistic copies.

We developed a model capable of translating paintings to photo-realistic images, trained without paired examples. The idea is to enforce a patch-level similarity between real and generated images, aiming to reproduce photo-realistic details from a memory bank of real images. This constraint is then adopted within an unpaired image-to-image translation framework, which maps each image from one distribution to a new image belonging to the other distribution.
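The patch-level similarity idea can be illustrated with a minimal sketch. It is not the Art2Real implementation: it assumes grayscale images stored as lists of rows, raw pixel patches instead of learned features, and cosine similarity as the matching criterion. All function names (`extract_patches`, `nearest_real_patch`, `patch_similarity_loss`) are hypothetical.

```python
import math

def extract_patches(image, size, stride):
    """Flatten every size x size patch of a 2D grayscale image (list of rows)."""
    rows, cols = len(image), len(image[0])
    patches = []
    for r in range(0, rows - size + 1, stride):
        for c in range(0, cols - size + 1, stride):
            patches.append(
                [image[r + i][c + j] for i in range(size) for j in range(size)]
            )
    return patches

def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0

def nearest_real_patch(patch, memory_bank):
    """Return (index, similarity) of the most similar real patch in the bank."""
    scores = [cosine_similarity(patch, real) for real in memory_bank]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores[best]

def patch_similarity_loss(generated_image, memory_bank, size=2, stride=2):
    """Mean cosine distance between each generated patch and its nearest real patch."""
    patches = extract_patches(generated_image, size, stride)
    distances = [1.0 - nearest_real_patch(p, memory_bank)[1] for p in patches]
    return sum(distances) / len(distances)
```

In this sketch a low loss means that every patch of the generated image has a close counterpart among the real patches, which is the property the similarity constraint is meant to encourage.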

Layout and Content Analysis in Digitized Books

Automatic layout analysis has proven to be extremely important when digitizing large amounts of documents. We developed a complete pipeline for layout analysis and content classification, introducing an SVM-aided layout segmentation process. The final output of the automatic analysis algorithm is a complete and structured annotation in JSON format, containing the digitized text as well as all the references to the illustrations of the input page. This annotation can be used by both visualization and annotation interfaces.
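As an illustration of what such a structured annotation might look like, the sketch below serializes one page to JSON. The schema is an assumption made for this example: the field names (`page_id`, `regions`, `kind`, `bbox`, `text`, `image_ref`) and the classes `Region` and `PageAnnotation` are hypothetical and do not describe the pipeline's actual output format.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class Region:
    kind: str            # "text" or "illustration" (hypothetical label set)
    bbox: list           # [x, y, width, height] in pixels
    text: str = ""       # transcribed text, empty for illustrations
    image_ref: str = ""  # reference to the cropped illustration, empty for text

@dataclass
class PageAnnotation:
    page_id: str
    regions: list = field(default_factory=list)

    def to_json(self):
        """Serialize the page and all of its regions to a JSON string."""
        return json.dumps(asdict(self), indent=2)

# Example page with one text block and one illustration (made-up values).
page = PageAnnotation(
    page_id="page-001",
    regions=[
        Region(kind="text", bbox=[40, 60, 500, 120], text="Incipit liber primus"),
        Region(kind="illustration", bbox=[40, 200, 300, 300],
               image_ref="page-001-fig-1.png"),
    ],
)
print(page.to_json())
```

Keeping text regions and illustration references in the same list lets an interface rebuild the page in layout order from a single file.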

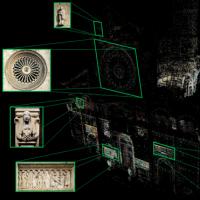

Egocentric Video Registration and Architectural Details Retrieval

Wearable devices and first-person camera views are key to enhancing tourists' cultural experiences in unconstrained outdoor environments. In these works we mainly focus on two topics: video registration and architectural details retrieval. The former is the task of precisely localizing the user (that is, estimating the 6 degrees of freedom of the camera they wear) with respect to a world reference system, while the latter deals with the unsupervised retrieval of the relevant details that cultural sites present.
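To make the "6 degrees of freedom" concrete, the sketch below represents a camera pose as three rotation angles plus a 3D position and uses it to express a world point in the camera frame. The yaw-pitch-roll convention and the function names are assumptions chosen for this example, not the parameterization used in the registration work.

```python
import math

def rotation_matrix(yaw, pitch, roll):
    """Camera-to-world rotation R = Rz(yaw) * Ry(pitch) * Rx(roll), angles in radians."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]

def world_to_camera(point, position, rotation):
    """Express a world point in the camera frame: R^T * (point - position)."""
    d = [point[i] - position[i] for i in range(3)]
    return [sum(rotation[i][j] * d[i] for i in range(3)) for j in range(3)]

# A pose has 3 rotational and 3 translational degrees of freedom (made-up values).
pose_rotation = rotation_matrix(yaw=math.pi / 2, pitch=0.0, roll=0.0)
pose_position = [0.0, 0.0, 0.0]
print(world_to_camera([0.0, 1.0, 0.0], pose_position, pose_rotation))
```

Registration then amounts to estimating these six numbers for every video frame, so that anything located in the world reference system can be placed relative to the camera.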

EgoVision and Human Augmentation for Cultural Heritage

Augmented Reality and Humanity present the opportunity for more customization of the museum experience, such as new varieties of self-guided tours or real-time translation of interpretive. At the end of this year several companies (such as Google or Vuzix) will release wearable computers with a head-mounted display. We would like to investigate the use of these devices for Cultural Heritage applications.