Human-Robot Interaction

Collaborative robots, or cobots, have been on the automation market for several years and have spread rapidly and widely. Even so, the Human-Robot Interaction (HRI) and Human-Robot Collaboration (HRC) paradigms still hold unexplored potential, along with challenges that have not yet been fully investigated or solved.

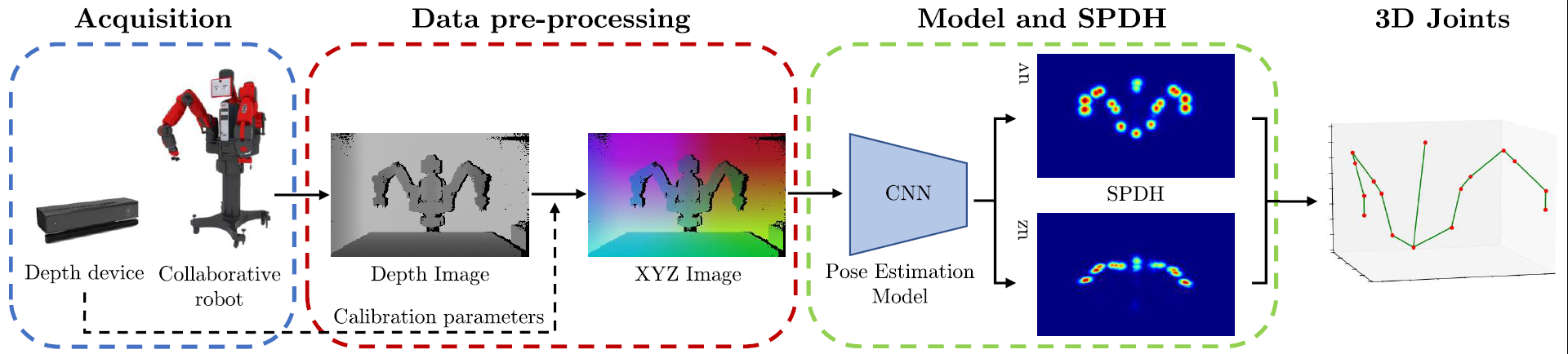

Among these challenges, knowing the instantaneous pose of robots and humans in a shared workspace is key to setting up an effective collaboration. Solving the pose estimation problem opens up new applications, ranging from interaction safety to behavior analysis.

Although robots usually expose their encoder status through dedicated communication channels, which enables the use of forward kinematics, an external method is desirable when the robot controller is not accessible. In particular, for an end-to-end system that predicts the pose of both robots and humans, a method based on an external camera view of the collaborative scene is more suitable.
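To make the forward-kinematics route concrete, here is a minimal sketch of how joint positions could be recovered from encoder angles. It assumes a hypothetical planar serial arm with revolute joints; the function name, link lengths, and angles are illustrative and do not describe the robots or methods in the publications below.

```python
import math


def forward_kinematics_planar(joint_angles, link_lengths):
    """Return the (x, y) position of the base and of each link end.

    Assumes a planar serial arm: each joint angle (radians) is measured
    relative to the previous link, and the base sits at the origin.
    """
    x, y, theta = 0.0, 0.0, 0.0
    points = [(x, y)]
    for angle, length in zip(joint_angles, link_lengths):
        theta += angle                 # accumulate the absolute link orientation
        x += length * math.cos(theta)  # advance along the current link
        y += length * math.sin(theta)
        points.append((x, y))
    return points


# Illustrative encoder readings for a two-link arm with unit-length links.
pose = forward_kinematics_planar([math.pi / 2, -math.pi / 2], [1.0, 1.0])
```

With these readings the first link points straight up and the second points along the x axis, so the last entry of `pose` lies at approximately (1, 1). A camera-based method aims to recover the same joint positions without access to the encoder readings.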

Publications

| # | Reference | DOI | Type |
|---|-----------|-----|------|
| 1 | Simoni, A.; Borghi, G.; Garattoni, L.; Francesca, G.; Vezzani, R. "D-SPDH: Improving 3D Robot Pose Estimation in Sim2Real Scenario via Depth Data", IEEE Access, vol. 12, pp. 166660-166673, 2024 | 10.1109/ACCESS.2024.3492812 | Journal |
| 2 | Simoni, A.; Pini, S.; Borghi, G.; Vezzani, R. "Semi-Perspective Decoupled Heatmaps for 3D Robot Pose Estimation from Depth Maps", IEEE Robotics and Automation Letters, vol. 7, pp. 11569-11576, 2022 | 10.1109/LRA.2022.3193225 | Journal |